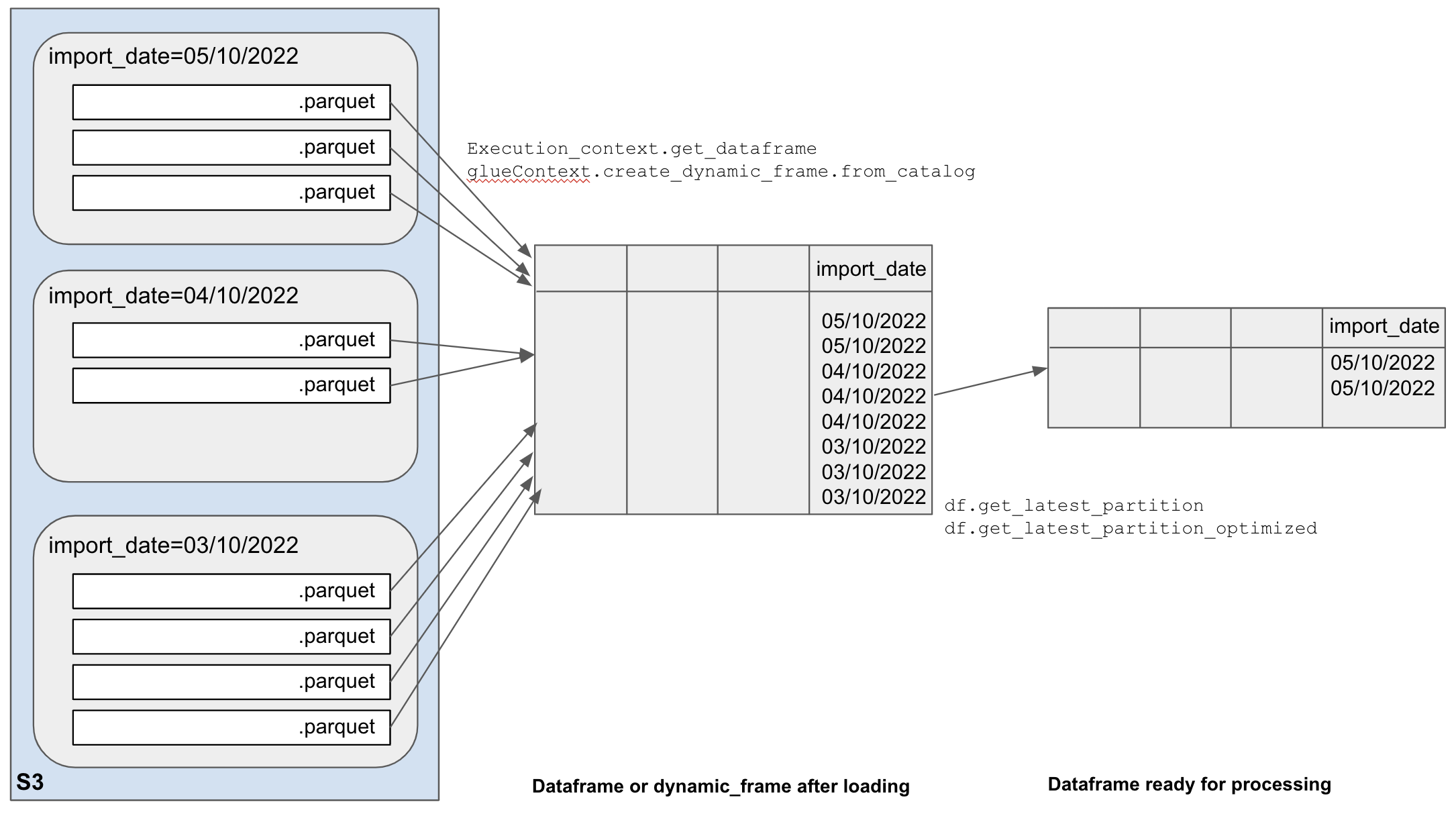

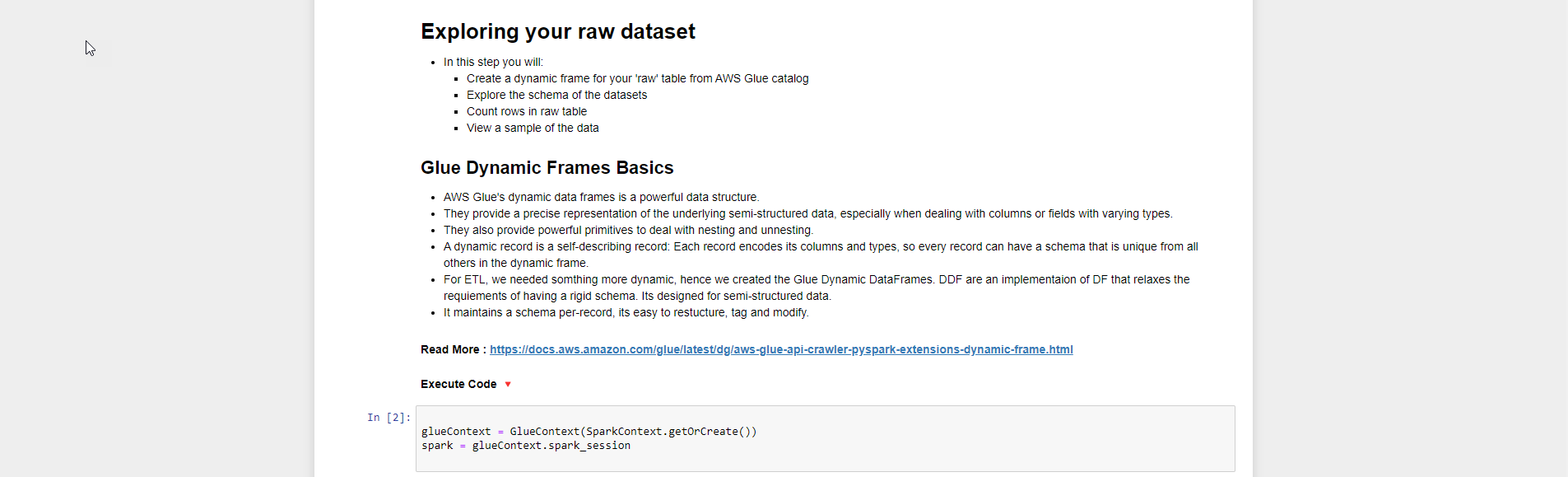

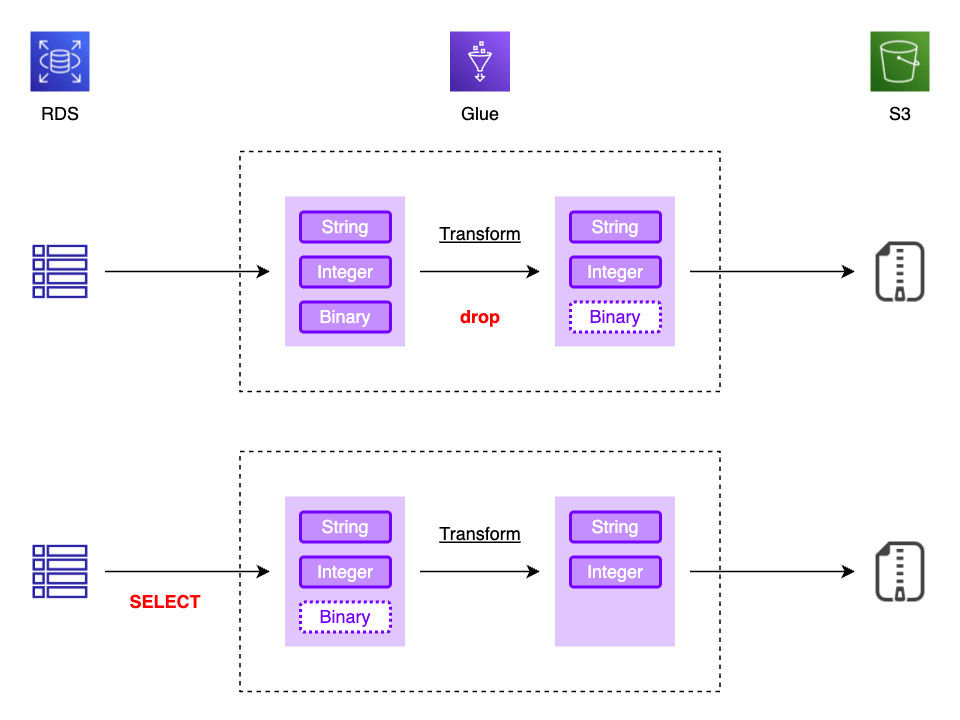

`create_dynamic_frame.from_catalog`

`create_dynamic_frame.from_catalog` reads a table registered in the AWS Glue Data Catalog into a DynamicFrame. Leveraging the Data Catalog is a recommended practice for PySpark jobs in AWS Glue: the catalog already records each table's schema, location, and format, so the job only needs to name a database and a table, such as a `customers` table.
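
A minimal sketch of reading a `customers` table, assuming a hypothetical database named `sales_db`. The GlueContext is passed in as an argument, so the function itself imports nothing from `awsglue`; deriving `transformation_ctx` as `read_<database>_<table>` is this sketch's own convention, not a Glue requirement.

```python
def read_catalog_table(glue_context, database, table_name, transformation_ctx=None):
    """Read a Data Catalog table into a DynamicFrame.

    glue_context is expected to be an awsglue.context.GlueContext. It is passed
    in rather than created here, so this function imports nothing from awsglue.
    """
    # transformation_ctx keys the job-bookmark state for this read. Deriving it
    # from the database and table names is a convention assumed by this sketch.
    ctx = transformation_ctx or f"read_{database}_{table_name}"
    return glue_context.create_dynamic_frame.from_catalog(
        database=database,
        table_name=table_name,
        transformation_ctx=ctx,
    )
```

Inside a Glue job this might be called as `read_catalog_table(GlueContext(SparkContext.getOrCreate()), "sales_db", "customers")`.
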

Besides `database` and `table_name`, the reader accepts `redshift_tmp_dir`, a `transformation_ctx` that job bookmarks use to track state, a `push_down_predicate`, a dictionary of `additional_options`, and a `catalog_id`. For partitioned tables, `push_down_predicate` is a Spark SQL expression over the partition columns that Glue evaluates against the catalog's partition list, so files in non-matching partitions are never listed or read.
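
The helper below sketches how a `push_down_predicate` string could be assembled from partition values. It assumes Hive-style string partition columns such as `year` and `month` (hypothetical names) and refuses values containing a single quote instead of attempting to escape them.

```python
def partition_predicate(partitions):
    """Build a push_down_predicate string from {partition_column: value}.

    Values are emitted as quoted string literals, which suits Hive-style
    partitions such as year=2024/month=01 (an assumption of this sketch).
    """
    if not partitions:
        raise ValueError("at least one partition column is required")
    clauses = []
    for column, value in partitions.items():
        text = str(value)
        if "'" in text:
            # Refuse rather than try to escape inside the Spark SQL literal.
            raise ValueError(f"unsupported quote in value for {column!r}")
        clauses.append(f"{column} == '{text}'")
    # All conditions must hold for a partition to be read.
    return " and ".join(clauses)
```

The result would be passed as `push_down_predicate=partition_predicate({"year": "2024", "month": "01"})` in the `from_catalog` call.
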

JDBC sources such as MySQL need different handling. A frequent question is how to create a Glue DynamicFrame from a MySQL table with a predicate pushdown condition. `push_down_predicate` is primarily a partition-pruning feature, so for JDBC reads AWS documents additional configuration parameters, passed to `from_catalog` through `additional_options`, that improve source query performance; for example, `hashfield` or `hashexpression` together with `hashpartitions` split the read into parallel queries. When the table is reached through a Glue connection, you may also need to specify the connection name explicitly, and if you read with `create_dynamic_frame.from_options` instead, one suggested fix is to include the `connection_type` parameter.
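
As an illustration of those JDBC options, this hypothetical helper builds the `additional_options` dictionary for a parallel read. The option names `hashfield`, `hashexpression`, and `hashpartitions` come from the AWS Glue documentation on parallel JDBC reads; requiring exactly one of the first two is this sketch's own rule, the default of 7 partitions follows the documented Glue default, and `customer_id` is a made-up column.

```python
def jdbc_parallel_read_options(hashfield=None, hashexpression=None, hashpartitions=7):
    """Build additional_options for reading a JDBC catalog table in parallel."""
    # This sketch requires exactly one way of splitting the table.
    if (hashfield is None) == (hashexpression is None):
        raise ValueError("set exactly one of hashfield or hashexpression")
    if hashpartitions < 1:
        raise ValueError("hashpartitions must be a positive integer")
    # Glue option values are passed as strings.
    options = {"hashpartitions": str(hashpartitions)}
    if hashfield is not None:
        options["hashfield"] = hashfield
    else:
        options["hashexpression"] = hashexpression
    return options
```

It would be passed as `additional_options=jdbc_parallel_read_options(hashfield="customer_id", hashpartitions=10)` to `create_dynamic_frame.from_catalog`. A column with evenly distributed values works best, since Glue divides the read on it.
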

Performance and empty results are the other recurring problems. One user reports that `create_dynamic_frame_from_catalog()` runs very slowly, whereas `create_sample_dynamic_frame_from_catalog()` with a maximum sample size of 5 million records does not; sampling reads only part of the table, so it helps during development but does not replace pruning partitions or parallelizing the full read. Another user creates a DynamicFrame from an Athena table that is part of the Glue Data Catalog and keeps getting an empty frame; two things worth checking are whether job bookmarks have already marked the data as processed and whether the table's partitions are registered in the catalog. A third has a catalog table called `mytable` and wants to filter the resulting DynamicFrame, which is what the `Filter` transform does.
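
A sketch of a record predicate for the `Filter` transform. The column `amount` and the threshold are hypothetical, since the original question does not say which rows to keep. `Filter.apply(frame=..., f=...)` calls the predicate once per record; the code only indexes the record with `record["amount"]`, so a plain dict can stand in for a record when testing.

```python
def min_amount_filter(min_amount):
    """Return a predicate that keeps records whose 'amount' is >= min_amount.

    'amount' is a hypothetical column name used only for illustration.
    """
    def keep(record):
        value = record["amount"]
        # Null amounts are dropped rather than compared.
        return value is not None and value >= min_amount
    return keep
```

In a Glue job: `Filter.apply(frame=mytable_dyf, f=min_amount_filter(100))`, with `Filter` imported from `awsglue.transforms` and `mytable_dyf` a hypothetical name for the frame read from `mytable`.
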

Once the data is transformed, `write_dynamic_frame.from_catalog(frame, name_space, table_name, redshift_tmp_dir="", transformation_ctx="")` writes a DynamicFrame using the specified catalog database (`name_space`) and table name.

Optimizing Glue jobs Hackney Data Platform Playbook

AWS Glue create dynamic frame SQL & Hadoop

Data transformation: Build a Data Lake with Your Data

glueContext create_dynamic_frame_from_options exclude one file? r/aws

Workarounds and caveats when an AWS Glue DynamicFrame has 0 records and its schema cannot be obtained DevelopersIO

How selecting columns when creating a Glue DynamicFrame improved performance

Dynamic Frames Archives Jayendra's Cloud Certification Blog

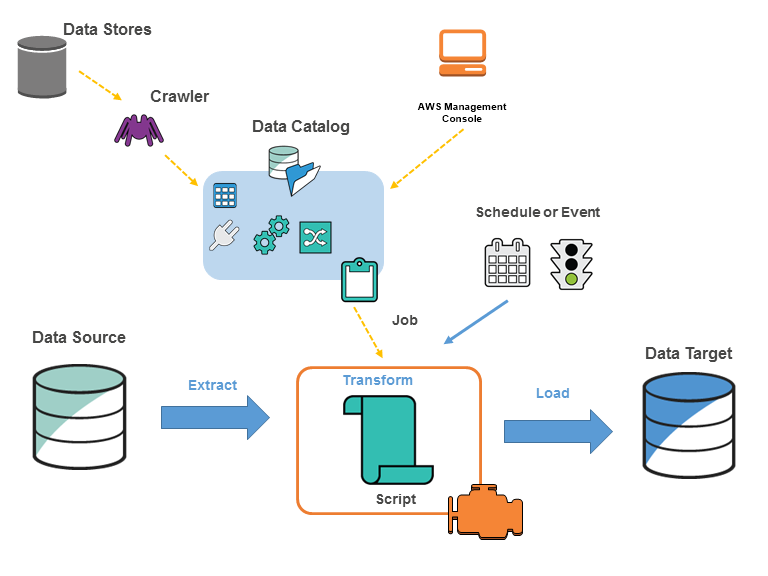

Getting started with AWS Glue

Creating a Glue DynamicFrame from a MySQL source with a predicate pushdown condition

Use `Join` to combine data from three DynamicFrames, starting from `from pyspark.context import SparkContext` and `from awsglue.context import GlueContext` and then creating a GlueContext.
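
A sketch of the three-way join, modeled on the persons/memberships/organizations example in the AWS Glue documentation. Passing `Join.apply` in as `join_apply` is a choice of this sketch so the join order can be checked without Glue; the key names (`id`, `person_id`, `org_id`, `organization_id`) come from that example and would differ for your own tables.

```python
def join_three(persons, memberships, orgs, join_apply):
    """Join three DynamicFrames in two steps.

    join_apply should be awsglue.transforms.Join.apply inside a Glue job; it is
    a parameter here so the join order can be checked without a Glue runtime.
    """
    # Step 1: persons.id == memberships.person_id
    person_memberships = join_apply(persons, memberships, "id", "person_id")
    # Step 2: orgs.org_id == memberships.organization_id
    return join_apply(orgs, person_memberships, "org_id", "organization_id")
```

In a job you would call `join_three(persons, memberships, orgs, Join.apply)` with `Join` from `awsglue.transforms`, and possibly `.drop_fields(["person_id", "org_id"])` on the result to remove the duplicated key columns.
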

`create_dynamic_frame_from_catalog(database, table_name, redshift_tmp_dir, transformation_ctx="", push_down_predicate="", additional_options={}, catalog_id=None)` returns a DynamicFrame that is created using a Data Catalog database and table name.

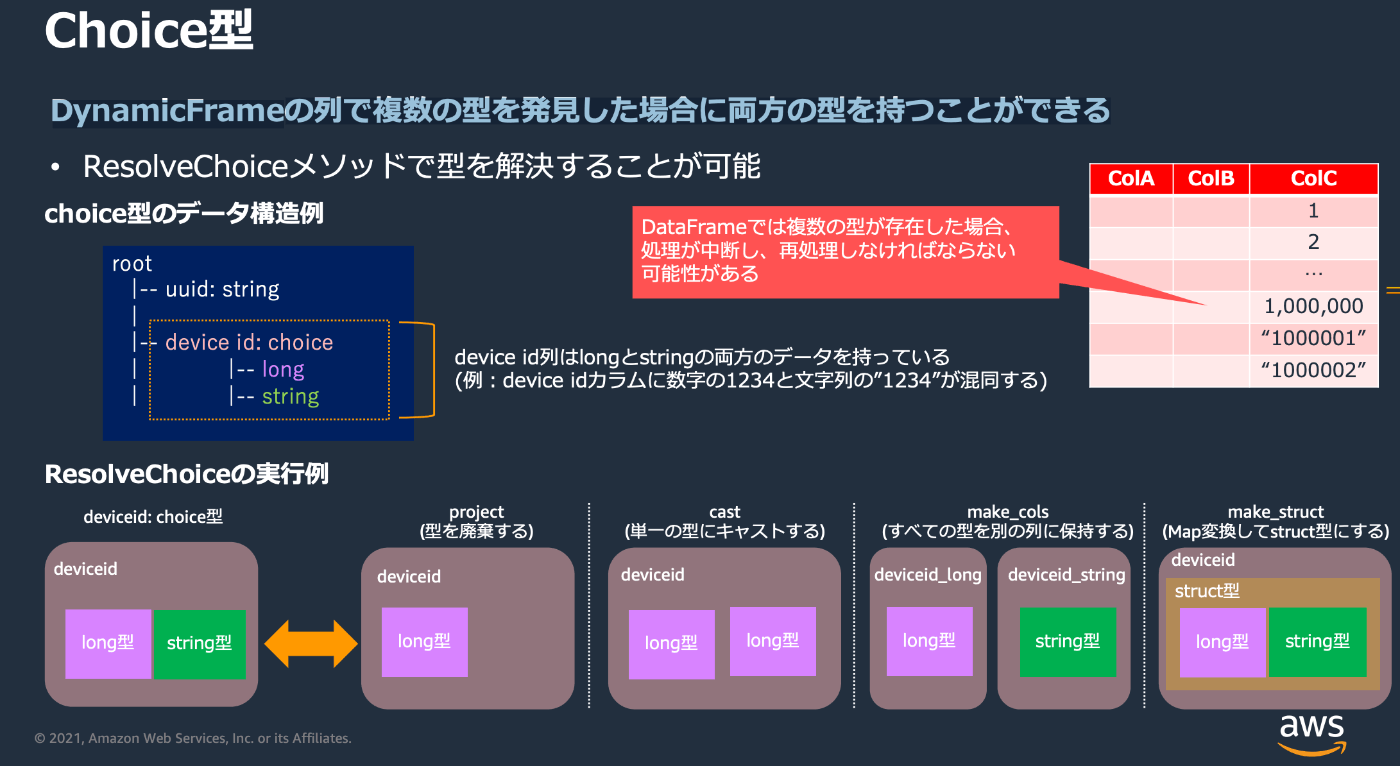

DynamicFrames can be converted to and from DataFrames using `toDF()` and `fromDF()`.
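
A minimal round-trip sketch: convert with `toDF()`, apply a DataFrame operation (here `dropDuplicates()`, chosen only as an example), and wrap the result back with `DynamicFrame.fromDF(dataframe, glue_ctx, name)`. The optional `from_df` parameter is an assumption of this sketch that lets the logic run without `awsglue` installed.

```python
def dedupe_dynamic_frame(glue_context, dyf, name="deduped", from_df=None):
    """Round-trip a DynamicFrame through a Spark DataFrame to drop duplicate rows."""
    if from_df is None:
        # Imported lazily: awsglue is only available inside a Glue environment.
        from awsglue.dynamicframe import DynamicFrame
        from_df = DynamicFrame.fromDF
    # toDF() exposes the full Spark DataFrame API.
    deduped = dyf.toDF().dropDuplicates()
    # fromDF(dataframe, glue_ctx, name) wraps the result back into a DynamicFrame.
    return from_df(deduped, glue_context, name)
```
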
